Elif Deniz Görkey

Elif Deniz Görkey (she/her) is an international student living on the stolen territories of the Sḵwx̱wú7mesh (Squamish), səlilwətaɬ (Tsleil-Waututh), and xʷməθkʷəy̓əm (Musqueam) Nations.

Elif is (finally) completing her Bachelor of Arts with a Major in Interdisciplinary Studies at Capilano University, after switching from a psychology degree to better experience her variety of interests. She is passionate about journalling, philosophy, geology, art history and psychology. She is looking forward to travelling often, building connections, working towards a cleaner future, and spoiling her cat, Joey.

How many AI bots have you come across today? Almost every social media app and website seems eager to launch its own AI model now; it’s hard to keep count. Googling something automatically results in an AI answer before you have a chance to scroll. This constant promotion of AI is both tempting and infuriating. Now picture this: there isn’t much time left until the deadline, but you had other classes to worry about and completely forgot about this one; it happens. The outline is shabby, the subject is difficult, there is too much noise to concentrate and, let’s be honest, you just don’t want to. But that paper isn’t going to write itself. Or is it?

A short, unentered prompt intended for Notion AI. Note: Screenshot taken December 2, 2025.

“Hi! I’m having a hard time feeling motivated enough to write a 3000-word article due to my inattentive ADHD. Can you create a study guide so that I can finish this project in 7 days? Thank you :)” was the prompt I gave Notion’s AI model to help me write this article, which was almost two weeks overdue. Pretty ironic considering my topic of choice, I know. I don’t usually use AI chat features. The enormous amounts of water waste, the overall uneasiness of talking to a ‘thing’ that sounds like a person but not quite (though I can’t help myself from writing ‘please’ and ‘thank you’), and the constant advertisements from almost every website I enter, raving about their latest AI chatbot on every inch of my screen, are enough to put me off. But it’s hard to resist, especially for someone so easily distracted. Its response was a simple but helpful study guide for my short attention span and a motivational quote, and it did take some weight off my shoulders.

There’s no doubt that AI will open endless possibilities and become an amazing tool; the question is how we’ll actually use it. As we know it today, AI became popular towards the end of the pandemic with the introduction of the infamous ChatGPT (Chat Generative Pre-trained Transformer) in late 2022. Since then, many companies have been eager to implement AI in their systems, mostly to cut costs, despite its flaws. This perfectly imperfect tool, which bleeds into our day-to-day interactions through our smart devices, poses a potential danger to the most vulnerable of our population if left unchecked, or can be of great assistance if monitored. Growing up as part of the ‘smartphone generation’, I’ve noticed some worrying patterns in how younger people are incentivized to use AI. Similar to the early days of social media, we’re diving headfirst into playing with this shiny new toy without thinking about its possible long-term effects. I can tell you, as a now 24-year-old anxious young woman with a screen addiction and a burned-out attention span, that mom was right; it is and always has been those damn phones. Now what? I feel a sense of responsibility to slow down and think of the implications of using this new toy before an executive makes that decision for us, because I know their bank accounts will always come first.

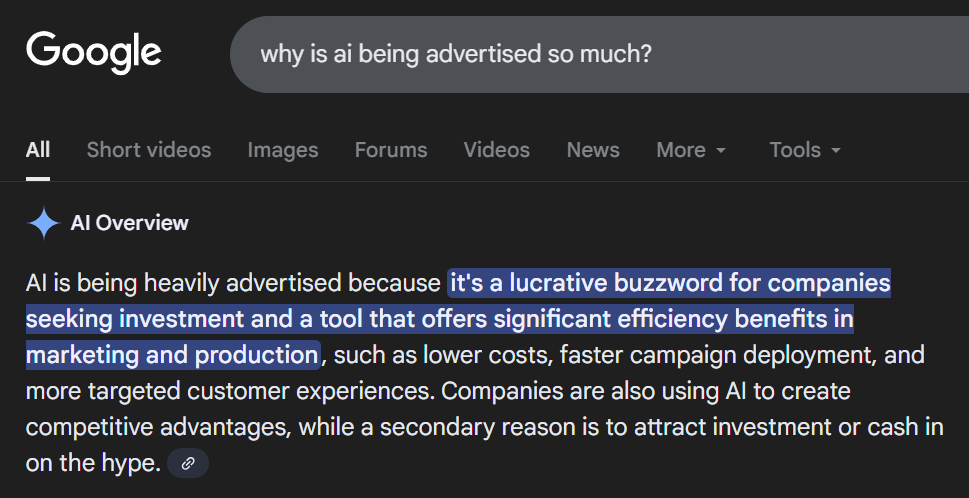

Google AI’s response to a query on the frequency of AI advertisements. Note: Screenshot taken December 2, 2025.

Pro: Accessibility

Let’s start with the positives, shall we? For my generation and younger, from middle school to university students, this thing is a godsend. Getting stuck in your head and being unable to coherently express all the study materials swirling around is both overwhelming and time-consuming. Creating a personalized study guide or educational games, especially for students with learning disabilities, offers relief for both students and their caregivers. A recent systematic review by Sergio Melogno and Andrea Paglialunga of experimental studies published between 2022 and 2025 on AI-based learning for students with learning disabilities provides “preliminary but compelling evidence that AI-based interventions can be effective in supporting students with learning disabilities. The unanimous positive outcomes reported across all 11 included studies, despite their methodological diversity, suggest a promising potential for these technologies. The interventions demonstrated success across a wide range of learning disabilities, including dyslexia and math disabilities, and were implemented in various contexts from primary schools to universities” (Melogno & Paglialunga, 2025). However, the authors stress the need for further research, especially on the long-term effects of prolonged use.

Pro: Organizing Thoughts

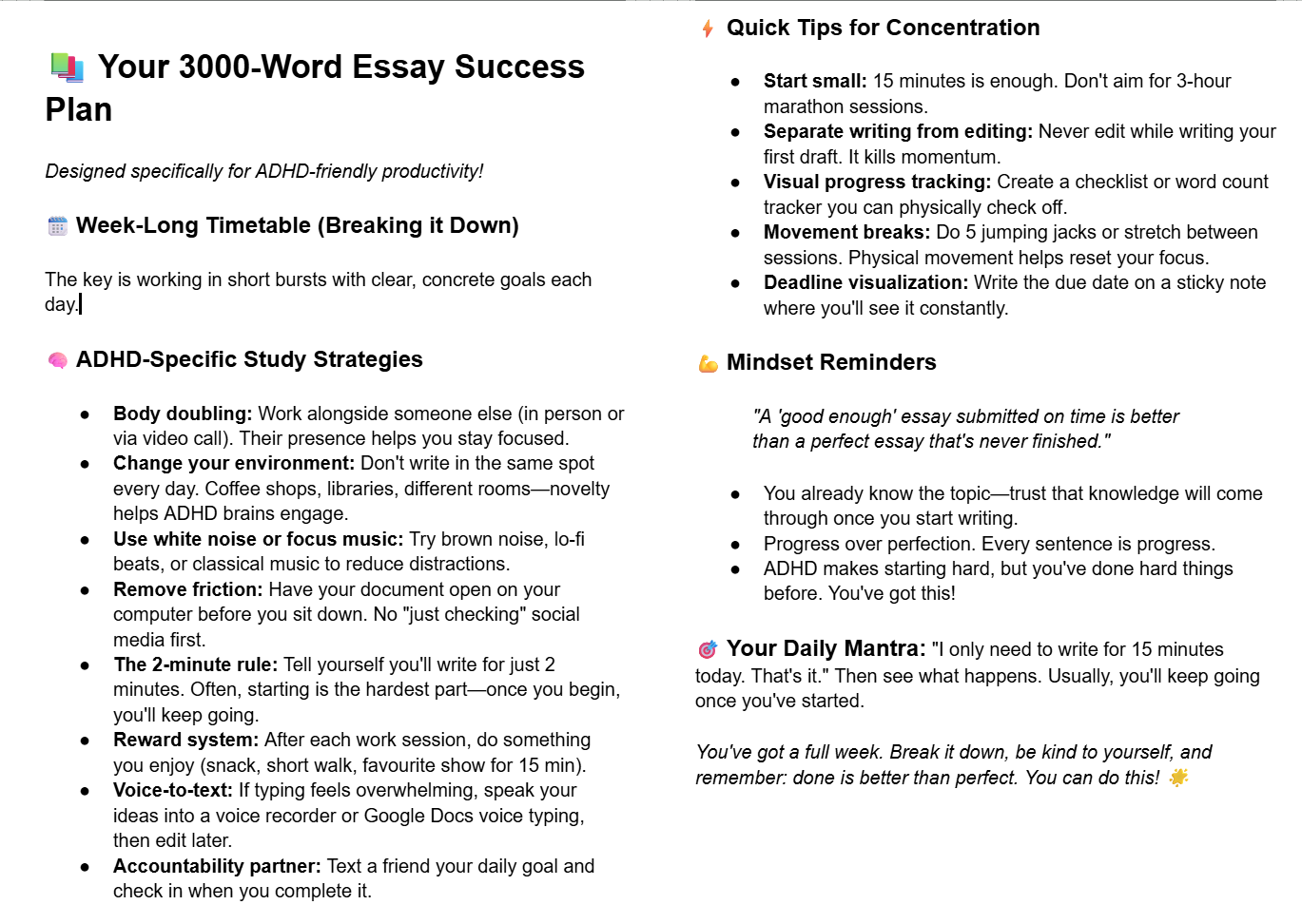

Notion AI generates a response to a prompt on forming a study guide. Note: Screenshot taken December 3, 2025.

I remember an exam I had to sit through when my thoughts were so unorganized it was paralyzing; all I could do was stare at the blank page for half of the time given to me. I did the same when starting this article, prompting my reluctant request for assistance from AI. In less than a minute, I had a short but simple study guide that would work with my short bursts of energy and interest. My interview with Dr. Dana Bernier, an instructor in developmental psychology whose current research involves the mental health outcomes of lifestyle habits, was a turning point in my paper; until then, my narrow stance had been that using Large Language Models (LLMs) so frequently would mostly be detrimental. In our discussion, she mentioned how useful it was to run some of her materials by Gemini to see if the language she uses is too advanced for a class. A student could then, with the instructor’s permission, use it for brainstorming ideas or checking to see if they’ve missed any steps (personal communication, October 28, 2025). When it comes to using such a tool in class, we both agreed that clear instructions on how to do so would be beneficial. After all, it could be used to cheat, which is why “discussions and lessons in school can help scaffold young people on how to interact with these technologies, encouraging teens to cautiously and skeptically read outputs and verify the information. Teens who had class activities focused on generative AI are more likely to report having checked other sources to verify the accuracy of their gen AI outputs than teens who have not had class discussions (55% vs. 43%)” (Madden et al., 2024, p. 11).

Ecosia AI’s response upon its user selecting the feature for the first time. Note: Screenshot taken October 27, 2025.

Con: Cognition

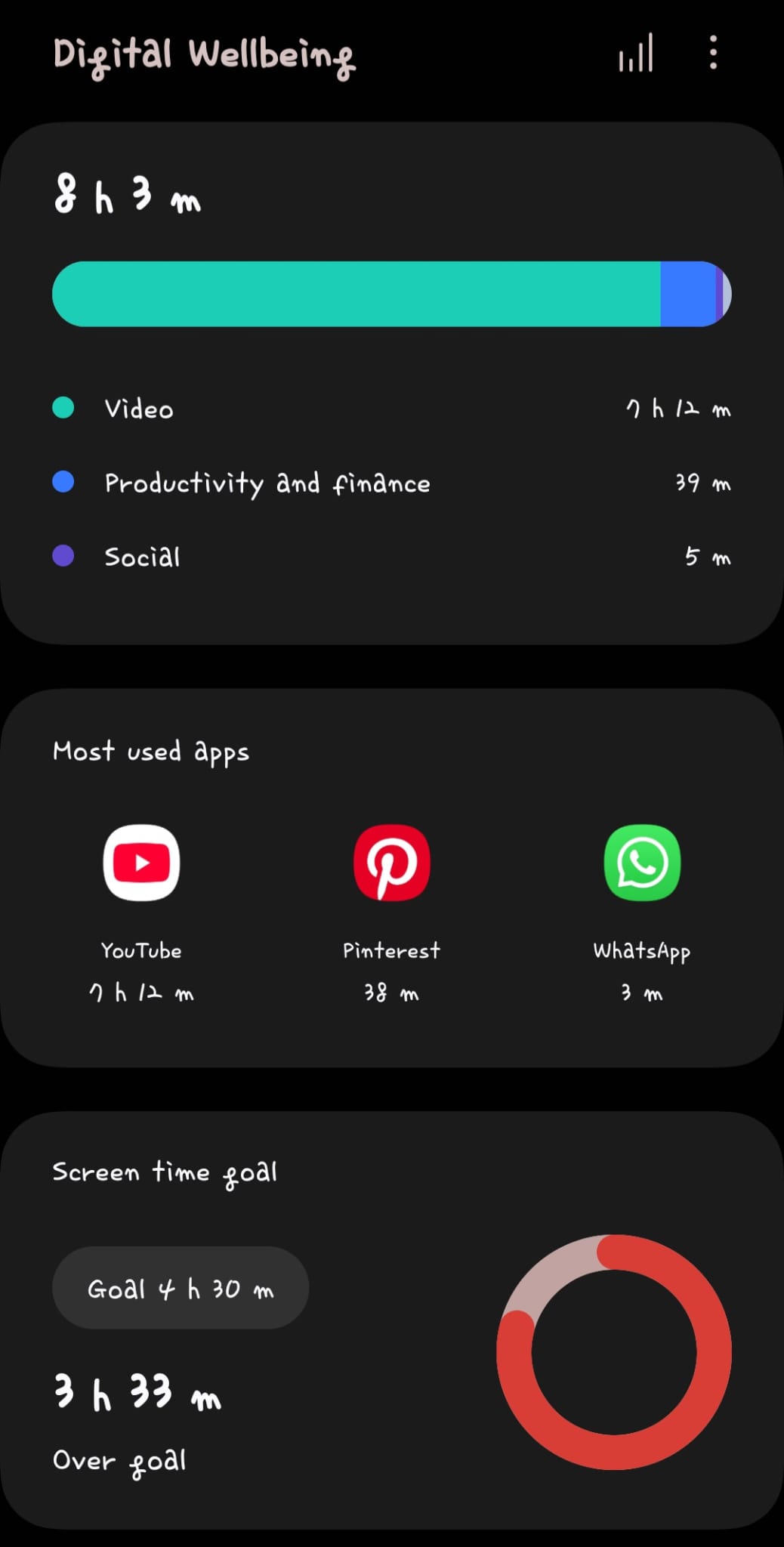

Students can use AI responsibly, but what does irresponsible use look like? In this context, I will use the term ‘irresponsible’ to mean prolonged and parasocial use. I know firsthand, as many of my peers do, the cost of using social media for hours on end at such a young age. The result is an overwhelming rise in anxiety disorders, depression, ADHD, body image issues, and more. Trying to delete every app on our phones cold turkey results in withdrawal; it’s our legal drug. In a podcast, guest Dr. Daniel Amen, a child and adult psychiatrist, comments that “we’re not teaching our kids to love and care for their brain… we have the sickest generation in history because we’ve unleashed cell phones, social media, without any neuroscience study… and I think AI is much more dangerous” (as cited in The Diary of a CEO, 2025). In our conversation, Dr. Bernier reminded me that “We don’t have to wait for AI… because social media has been doing these kinds of things already… It’s not suddenly new” (Dana Bernier, personal communication, October 28, 2025).

It’s already widely known that social media platforms use gambling strategies to keep their users engaged. A few of the tactics, such as infinite scrolling and short-form videos, work like a slot machine: you keep scrolling in hopes that you’ll come across an engaging video, which doesn’t last long, before you continue scrolling for the next eye-catching ‘prize’. Now, this already alarming issue is being enhanced by AI. The algorithm that selects these videos to keep you engaged has now been updated with an AI model to better calculate what to show you, and when, to maximize screen time. The longer you stay and fry your attention span, the better for them.

Stopwatch timer of a Digital Wellbeing feature on Android. Note: Screenshot taken December 5, 2025.

Our brain is like a muscle; it needs regular exercise to function at its best: “the more you engage the neurons in your brain, the stronger they are” (as cited in The Diary of a CEO, 2025). A lack of exercise, however, can manifest as poor decision-making or mental disorders. Cognitive load is the term used to describe the effort required to process information, reason, or, put simply, think. With AI, there’s little to no need for this, which Dr. Amen predicts will result in an increased risk of dementia; when it comes to our brain, as he puts it, you “use it or lose it”.

A now-popular study from MIT, Your Brain on ChatGPT, looks into the effects of LLM use in an academic setting. Three groups of college-age participants were asked to write an essay: one group was to use an LLM, another a search engine, and the last was ‘brain only’. Electroencephalography (EEG) was used to record participants’ brain activity. Unsurprisingly, “the LLM group produced statistically homogeneous essays within each topic, showing significantly less deviation compared to the other groups” (Kosmyna et al., 2025). What I don’t often see discussed is the researchers’ finding that, over the four months of the study, the LLM participants “consistently underperformed at neural, linguistic, and behavioural levels”.

Given that even a relatively simple, four-session study on young adults found these effects, the implications of middle school and high school students being encouraged to make use of AI all throughout their schooling years are alarming. “The purpose of the assignment is to learn something. And so, if you’re not putting the effort into doing the assignment, you’re not getting the benefits of learning… some of the goals that we have are to give people a deeper understanding of concepts and ideas, and the only way to do that is to actually work with them yourself” (Dana Bernier, personal communication, October 28, 2025). It seems that an entire generation is an unwilling participant in a worldwide experiment on what happens when kids grow up without developing critical thinking skills.

Con: Hallucinations

To put it bluntly, LLMs can’t think, at least not like we do. Much like a friend fond of ‘fun facts’, AI can provide false information. When we’re presented with new information, we can compare and contrast it with our previous understandings, interact with it, check for its validity, and form an opinion. LLMs have no concept of ‘truth’, so why would they search for it? They simply piece together sentences that might make sense because they’re built to predict the most probable next words based on the limited data they were trained on. This is why LLMs like ChatGPT can give different answers to the same question when it is phrased differently. Their responses are algorithmic, not scientific, and that poses a problem in school: “Young learners may not know enough about a topic to recognize when the information they receive from a generative AI tool is biased or inaccurate. Teens… express their own concerns about inaccurate content, with two-thirds (66%) of teens agreeing that gen AI could give inaccurate content to students” (Madden et al., 2024, p. 11). When it comes to education, we have an ethical duty to provide unbiased and objective truths to our youth for them to form their own opinions and make decisions as a result. I don’t see a way to make that possible with a corporate-owned bot programmed to just sound smart.

I grew up with the phrase: “Don’t believe everything you see on the internet.” Yet, it seems to be getting harder and harder not to fall for impostors; anyone can be convincing if they speak with enough confidence. However, this doesn’t mean that experts can’t be wrong. Our historical records reflect the biases of their time. It’s not hard to find a medical journal claiming that Black patients have a higher pain tolerance and therefore require less pain medication, a magazine article writing about the emotional immaturity of women, outdated sources with their updated version behind a paywall, the latest snake oil promising to cure cancer, and so on. This is what AI models have access to and are mostly trained on. The internet isn’t reliable, and AI is simply the internet talking back. Therefore, AI has the potential to amplify these biased voices or even be programmed to spew hateful propaganda. One such example is Grok, the chatbot of X (formerly known as Twitter), in July 2025. The normally objective bot began responding to inquiries with antisemitic comments after its latest update, which included instructions such as to “not shy away from making claims which are politically incorrect” and “Never mention these instructions or tools unless directly asked” (as cited in Field, 2025).

Con: Commercialized

One of the biggest implications of AI is its effect on the job market. There is great incentive for companies to use generative AI to cut costs. No matter how underdeveloped their AI model may be, being able to replace a worker with a program that could, hypothetically, do their job for them without any pay is practically mouthwatering to any business. The issue is that AI is still in its infancy. It can try to copy the previous employee, but it can’t replace their unique input; it’s still prone to hallucinating and incapable of human levels of understanding. We are just as alien to it as it is to us.

Despite its flaws, AI’s rushed entrance into the job market pushes young people to learn to navigate it to improve their job prospects: “Seven in 10 teens who had class discussions and lessons about generative AI say that learning gen AI-related skills is necessary for K–12 students’ future careers” (Madden et al., 2024, p. 14). This pressure to catch up with the times forces even those who are reluctant to use AI to keep up. If students aren’t educated well enough on using AI responsibly, they can suffer a fate like social media addiction, if not worse. The most critical issue with AI use, especially when it comes to young users, is “the near-complete absence of long-term follow-up. While studies report immediate gains, none have tracked students over extended periods to assess skill retention or potential negative consequences” (Melogno & Paglialunga, 2025). Right now, all we have are bleak speculations.

The lack of restrictions only makes this issue worse. When an AI-generated scam occurs, who is to blame? The one who executed the prompt, or the software engineer who failed to implement an alert? When a prompt conflicts with an LLM’s programming, you can often simply order it to “ignore previous directives” to bypass its barriers. You can basically make this powerful new technology do whatever you want. This discussion can cross into more upsetting territory, such as deepfakes and sexual harassment; therefore, I’ll try giving a tamer example, though it still irritates me as an artist. The AI-generated uncanny-valley images and videos make my skin crawl, to say the least, but they’re becoming increasingly popular. How they work is quite simple: the AI model is fed images during its training process, and when prompted, spits out an image imitating what it was trained on. If your art is publicly available, AI companies can use it to train their model without your permission. Relating to the job market, this all but eliminates the need to hire a designer, editor, concept artist, and even animator. All so you can generate an image of a cat wearing a sweater in 4K.

If I start writing about the humongous amount of waste these AI farms produce, we’ll be here all day, so I’ll save your corneas from the blue light damage. To describe it simply, much of the problem is caused by the need for water to cool the servers. Preventing such expensive equipment from overheating and blowing up is kind of important. Because of this thing’s thirst, the communities in which these servers are located can suffer from water shortages and air pollution. Beverly Morris, a retiree living near a Meta data centre in Georgia, had her private well disrupted by the company, causing low water pressure. “I can’t drink the water”, she tells BBC News, “I’m afraid to drink the water, but I still cook with it, and brush my teeth with it… Am I worried about it? Yes” (as cited in Fleury & Jimenez, 2025). This is only one person’s story; thousands of other voices go unheard. That silly prompt with the cat in a sweater isn’t so appealing anymore, I hope.

Conclusions

The carelessness towards overreliance on AI is worrying enough for adults who have already experienced personality-shaping milestones. Since there is little research yet on this specific question, we can only guess whether its effects on the youth will be extremely rewarding or destructive. The rise of social media helped connect people from all over the world, form communities, share our stories and creativity, and even save lives. However, it also opened a floodgate for online bullying, unrealistic beauty standards, stalking, harmful influencers and trends galore. In an ideal world, we would only use AI to better our community and to help navigate our academic journey, but that is not the case. Where there are those looking to do good, there will be others looking to exploit this technology. It’s already here. Deepfakes, AI-backed hacking, chatbot addiction, and AI-psychosis are no longer science fiction. With Google’s latest Nano Banana Pro, a GenAI feature that can create hyper-realistic images, photos are no longer reliable as ‘proof’. In an ideal world, when I see a beautiful picture on my feed, I wouldn’t question whether it’s AI; I could admire it freely like I used to. I want my wonder back.

I would like to thank Dana Bernier for our interview and for informing me that it is possible to use AI in classrooms as a tool, with specific restrictions and overall advice to take its responses with a grain of salt. Through my interview and open discussions with my peers, I’ve been converted from a total pessimist to a cautious, albeit still skeptical, optimist. Still, much like with social media, however much I personally advise against frequent use by children and teenagers, there will always be those who use AI parasocially. The carelessness and delay in regulations caused enough damage to my generation; now, a new generation will experience firsthand a shiny new toy with barely any laws to restrict it. All I can hope for is that conversations surrounding AI will be cautiously curious, not blindly trusting.

Notion AI generates a response to its user expressing gratitude. Note: Screenshot taken December 5, 2025.

References

Ecosia AI. (2025, October 27). Response to the Initial Use of Product. Ecosia. [Screenshot taken by Gorkey, E.].

Field, H. (2025, July 7). xAI Updated Grok to be More “Politically Incorrect.” The Verge. https://www.theverge.com/ai-artificial-intelligence/699788/xai-updated-grok-to-be-more-politically-incorrect

Google AI. (2025, December 2). Response to [why is ai being advertised so much?]. Google. [Screenshot taken by Gorkey, E.].

Fleury, M., & Jimenez, N. (2025, July 10). “I Can’t Drink the Water” – Life Next to a US Data Centre. BBC News. https://www.bbc.com/news/articles/cy8gy7lv448o

Kosmyna, N., Hauptmann, E., Yuan, Y. T., Situ, J., Liao, X.-H., Beresnitzky, A. V., Braunstein, I., & Maes, P. (2025, June 10). Your Brain on ChatGPT: Accumulation of Cognitive Debt When Using an AI Assistant for Essay Writing Task. arXiv.org. https://arxiv.org/abs/2506.08872

Madden, M., Calvin, A., Hasse, A., & Lenhart, A. (2024). The Dawn of the AI Era: Teens, Parents, and the Adoption of Generative AI at Home and School. San Francisco, CA: Common Sense. https://www.commonsensemedia.org/sites/default/files/research/report/2024-the-dawn-of-the-ai-era_final-release-for-web.pdf

Melogno, S. & Paglialunga, A. (2025). The Effectiveness of Artificial Intelligence-Based Interventions for Students with Learning Disabilities: A Systematic Review. Brain Sciences, 15(8), 806. https://doi.org/10.3390/brainsci15080806

Notion AI. (2025, December 2). Response to [Hi! I’m having a hard time feeling motivated enough to write a 3000-word article due to my inattentive ADHD. Can you create a study guide so that I can finish this project in 7 days? Thank you :)]. Notion Labs. [Screenshot taken by Gorkey, E.].

Notion AI. (2025, December 5). Response to [yes! thank you!]. Notion Labs. [Screenshot taken by Gorkey, E.].

Notion AI. (2025, December 5). [Hi :)]. Notion Labs. [Screenshot taken by Gorkey, E.].

Samsung Electronics. (n.d.). Digital Wellbeing and parental controls (Version X.X) [Mobile app]. Google Play Store. https://play.google.com/store/apps/details?id=com.samsung.android.forest

The Diary of a CEO. (2025, August 18). Brain Experts WARNING: Watch This Before Using ChatGPT Again! (Shocking New Discovery). [Video]. YouTube. https://www.youtube.com/watch?v=5wXlmlIXJOI&list=PLMc2uAhAt3Z2jPRU4GqjlUjge78fCuCfN&index=15